白夜 Tira

@HakuyaTira

Followers: 3,644

Following: 202

Media: 34

Statuses: 382

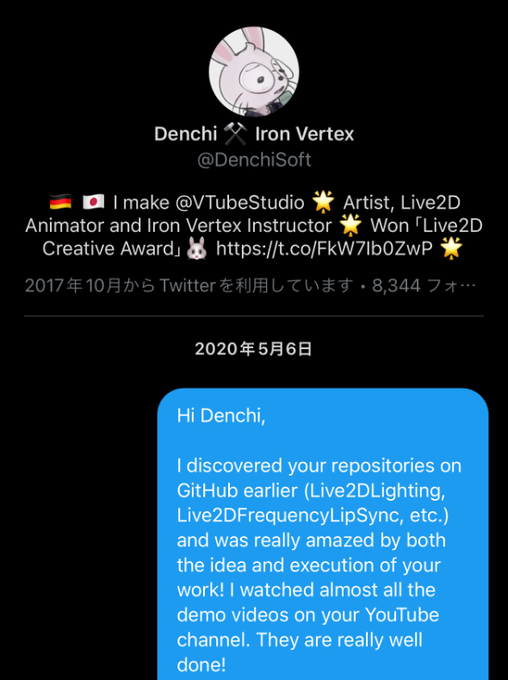

Your average white tiger hikikomori. Just call me Tira! I stream on Bilibili. 👇🏻 Chinese / ENG OK! Working at @HakuyaLabs Mama: @ke02152 / 🎨: #TiraArt

Night City

Joined August 2022

Pinned Tweet

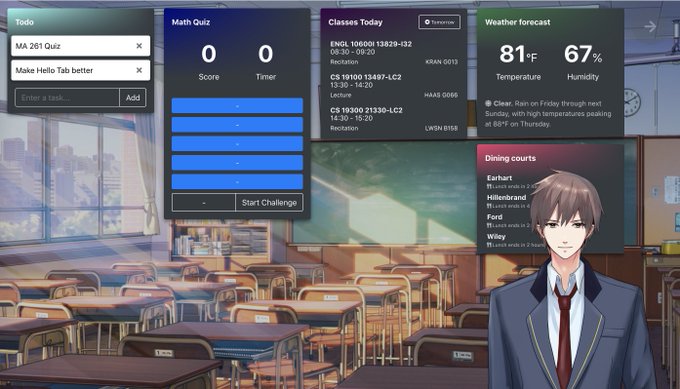

Let me introduce myself, I suppose?! My name is Hakuya Tira!⚡️I strike lightning at people... Just kidding. I'm a harmless white tiger hikikomori 🤗

🎨: @ke02152

#TiraArt #VTuber #VTuberUprising #ENVTuber

14

67

437

I'm gonna take the credit this time - I spent my entire Christmas break binge writing these 69 (lol) pages!!! 😭😭

10

32

476

Another important issue I rarely see people discuss: Live2D is a proprietary technology. If you have a 3D model you can use it pretty much everywhere without limitations; Live2D models only work in Live2D-licensed applications. Also…

8

20

319

People tend to think #Warudo is developed by some unknown, mysterious entity, but the entire @hakuyalabs is literally just me (dev) and @YumemiyaYoyu (everything not dev), and we've both been VTubers for years! 😛 We just created the 3D VTubing app we wanted.

31

25

317

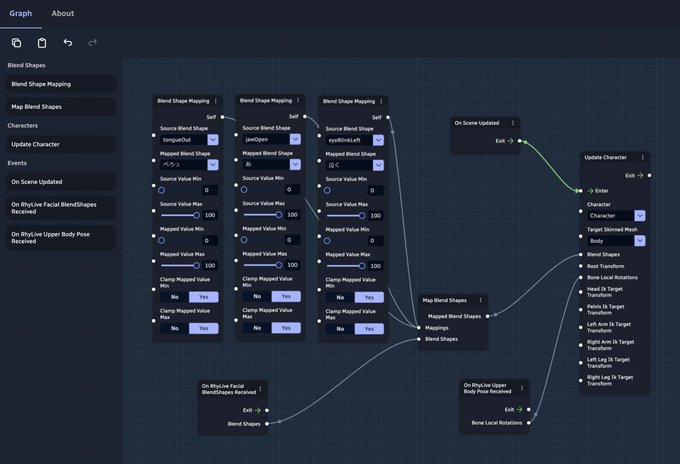

This is honestly super cool even from the developer’s perspective

This really shows what you can accomplish with only blueprints!! No scripting, just dragging nodes 🥹✋🏻

#WarudoPro

🥤Pirate Boy VTuber #ShiratoriAsuta from #hOuOu (Bilibili: 白鸟Asuta_Channel) 🏴‍☠️

✨Brings you a video full of rich interactive features, from his latest 3D debut livestream event!🎇

🕊️Come and enjoy these fun and engaging moments with Asuta and his seagull “Pine”!🍍

8

71

643

3

29

273

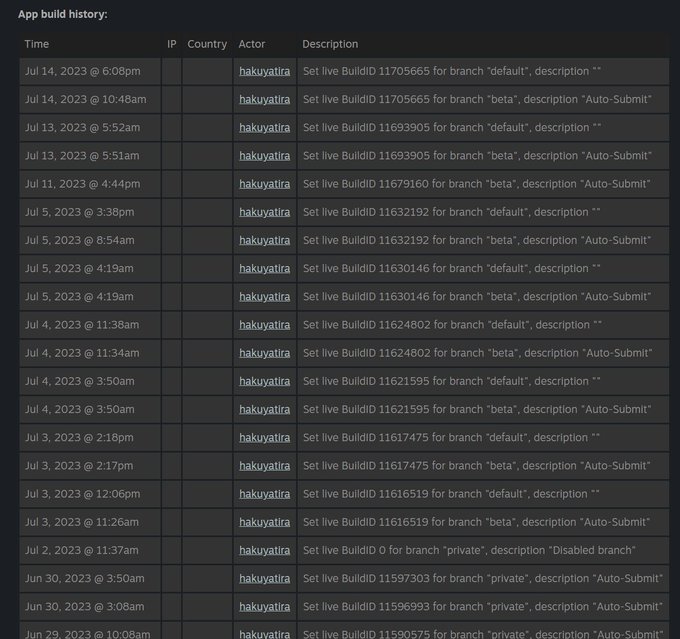

It's been 186 days since Warudo's EA launch.

Here I am, sitting on a bus back home, not having slept since yesterday in an attempt to forcefully fix my terrible sleep schedule, and scrolling the #Warudo tag (as always), viewing your enthusiastic responses to the latest "Live2D" physics update... 🧵

28

25

264

🎄 My cover of "Merry Christmas, Mr. Lawrence" from last night's stream. 😊 Merry Christmas everyone!

(Hand tracking here is completely based on MIDI input!)

#Warudo

#VTuberUprising

8

22

162

Don't stare at me like that! I just haven't... Huff... exercised in quite a while... Huff... 🤢

🎨: @DrawDrawDeimos

4

9

126

Jaw dropped. I fully appreciate all the technical challenges & novelties involved in this, but I think Live2D rigging is time-consuming & expensive enough, and it is much more difficult to make 2D models look 3D than to make 3D models look 2D

VTS Fullbody Tracking - plugin for #VtubeStudio

It can track: shoulder, elbow, knuckles (thumb, index, pinky), wrist, hip, knee, ankle, heel, foot index

Try it and join the alpha!

Download & information on GitHub:

#VTSFullbodyTracking

#Live2D

#VTuber

63

662

3K

3

5

129

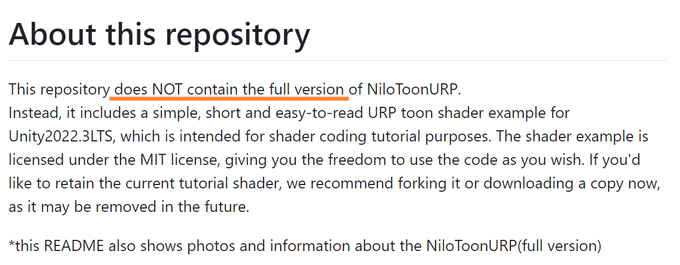

Some words on NiloToon and Warudo Pro:

@YumikoVT

Warudo dev here! Let me clarify a few things:

1. NiloToon is not free to use! The code you saw on GitHub is a "lite" version Colin created for educational purposes. All the fancy screenshots you are seeing do not come from the lite shader.

1

9

33

3

9

67

@Anime4dayss

Well 🫣

1

2

57

Have a very exciting #Warudo idea but don’t have time to write it right now. Oh well. Let’s hope I can squeeze some time in April and show y’all a prototype… 😙

3

2

50

AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA

1

3

50

@Cimrai

Warudo dev here! Both programs are similar in a lot of ways. You can pretty much migrate any setup from one to another. So - it never hurts to try both! 😊

If you're going to try Warudo, check out the blueprints we shared that allow you to do this 👇

1

6

49

If a friend hadn't sent me this, I would have missed this gorgeous fanart!! 😭 Thank you so much!! 🙇

AHHHHHHHH I'm so happy… 🥹

#TiraArt

1

2

45

For the sake of branding, I always used "we" when talking as @hakuyalabs, so it's hilarious someone thought we have an HR person running the Warudo account. That HR person is me!! 😭

Maybe I should just reply with this account more often...

1

0

33

I don’t get why people get upset over this. Freelancing still requires responsibility - if you can’t deliver something, you shouldn’t get paid.

I’ve always trusted artists and paid in full upfront, but that did result in two illustrations that still haven't been delivered after 4 years. 🥲

0

2

32

@YumikoVT

3. Therefore, to make this more affordable, we actually partnered with NiloToon to offer a special VRM -> NiloToon service, which allows you to convert your model into NiloToon for use in Warudo Pro, *without* purchasing the NiloToon shader.

So it's quite the opposite!

3

0

28

😇This is me VVVVVVVVVV

👏WARUDO👏SHOWCASE👏

@HakuyaTira's 3D reveal stream with Warudo! Yes he's the boss of our lab in case you're wondering 🥹

Model: @night_0go

Video: @fline_channel

BGM: never ender - livetune

#Vtuber #vtubermodel #modelreveal #VTuberUprising

2

16

52

2

1

24

@YumikoVT

2. To use NiloToon, you usually have to purchase the NiloToon shader.

However, NiloToon is more often sold to companies (e.g., Hololive) for their internal Unity projects, not indie VTubers, so the cost can come off as really high if you just want NiloToon on your VTubing model.

1

0

21

@YumikoVT

Anyway, we haven't publicly mentioned this anywhere, so I understand there's confusion. Hope this clears things up a bit! 😀

1

0

19

The times when Hoshi and ZeroSkyes answered questions faster & better than I could. The times when everyone proudly shows their best moments with Warudo in the #showcase channel. The time when I flew to Shanghai to supervise a VTuber concert using Warudo with optical tracking.

1

0

17

.@AniLive_app This is egregiously wrong. No, you do not need PSD/cmo3 files to animate a Live2D model, unless you don't use the Live2D SDK (which is literally impossible). I struggle to find a *single* reason why raw files are needed in the first place. Please explain.

@KiraOmori

Thanks for the mention! We definitely understand your concerns.

AniLive doesn’t use VTS, so we ask for the files to adapt models to our in-house software.

We use them for the sole purpose of importing + making avatars move on the app!

More info to follow w/o X character limits!

14

3

20

1

3

18

Oops now it’s even worse

@Cap039

@AniLive_app

heya! so from what I'm gathering ani live sends the whole model to the viewer? 🤔 sorry the more I learn about the app the more concerned I'm about it. I want to give you guys the benefit of the doubt but theres a lot of concerning stuff around

(source )

4

1

6

4

2

17

@gelisor

I have experienced exactly this - a Live2D rigger ghosted me when I asked to purchase the original project files for some model upgrades, so I had to commission another rigger to redo the entire rigging. 😰

0

0

15

This! Seen a lot of Palworld discussions lately, blaming the devs and players for creating/playing a game filled with AI art - which is unproven and most likely not true (text-to-3D GenAI is bad & unusable right now). Whether it plagiarized Pokemon is a more interesting debate.

3

0

11

@melfinadarling

Basically put, you need Warudo Pro to render Warudo character mods that are configured with NiloToon by a modeler who owns NiloToon (ex: @mofuworkshop @llay11a). If your modeler has NiloToon, you only need the Warudo Pro license. Otherwise, we can convert your model for you. 🙌🏻

2

4

11

@roxymanticore

Thank you for your kind support Roxy! 😄 Just to be clear though, I agree with other replies here that many users just find VSF/VNyan more intuitive. Warudo isn't for everyone, but I'm happy it works well for you!

0

1

9

@KiraOmori

@AniLive_app

Requiring the PSD file for checking missing assets is more BS. If you have the runtime file, you have all info you need to detect missing assets and prompt the user to upload a correct model.

Alpha stage is not a good excuse for breaching trust and giving non-answers like this.

1

0

7

@KiraOmori

@AniLive_app

I don’t buy this at all, frankly. This is like an app requesting front camera permissions because they want to check the user is a human. There are other non-invasive ways to resize and position a model. (1/2)

1

0

7